Mini Shai-Hulud and the Collapse of Software Provenance Trust

The uncomfortable part about Mini Shai-Hulud is not the malware itself. Credential stealers are everywhere. Obfuscated JavaScript loaders in npm packages are not exactly new territory either. The problem is that this thing successfully rode through trusted publishing infrastructure and valid provenance paths, which means a lot of the security plumbing people have been congratulating themselves over for the last two years did exactly nothing once the attacker crossed the workflow boundary.

That boundary matters.

Once somebody is executing inside the release pipeline, the registry happily distributes the payload for them. Signed. Attested. Published from the correct maintainer namespace. Same GitHub org. Same package names your dependency scanner already approved last quarter and nobody has revisited since because the original reviewer moved to platform engineering.

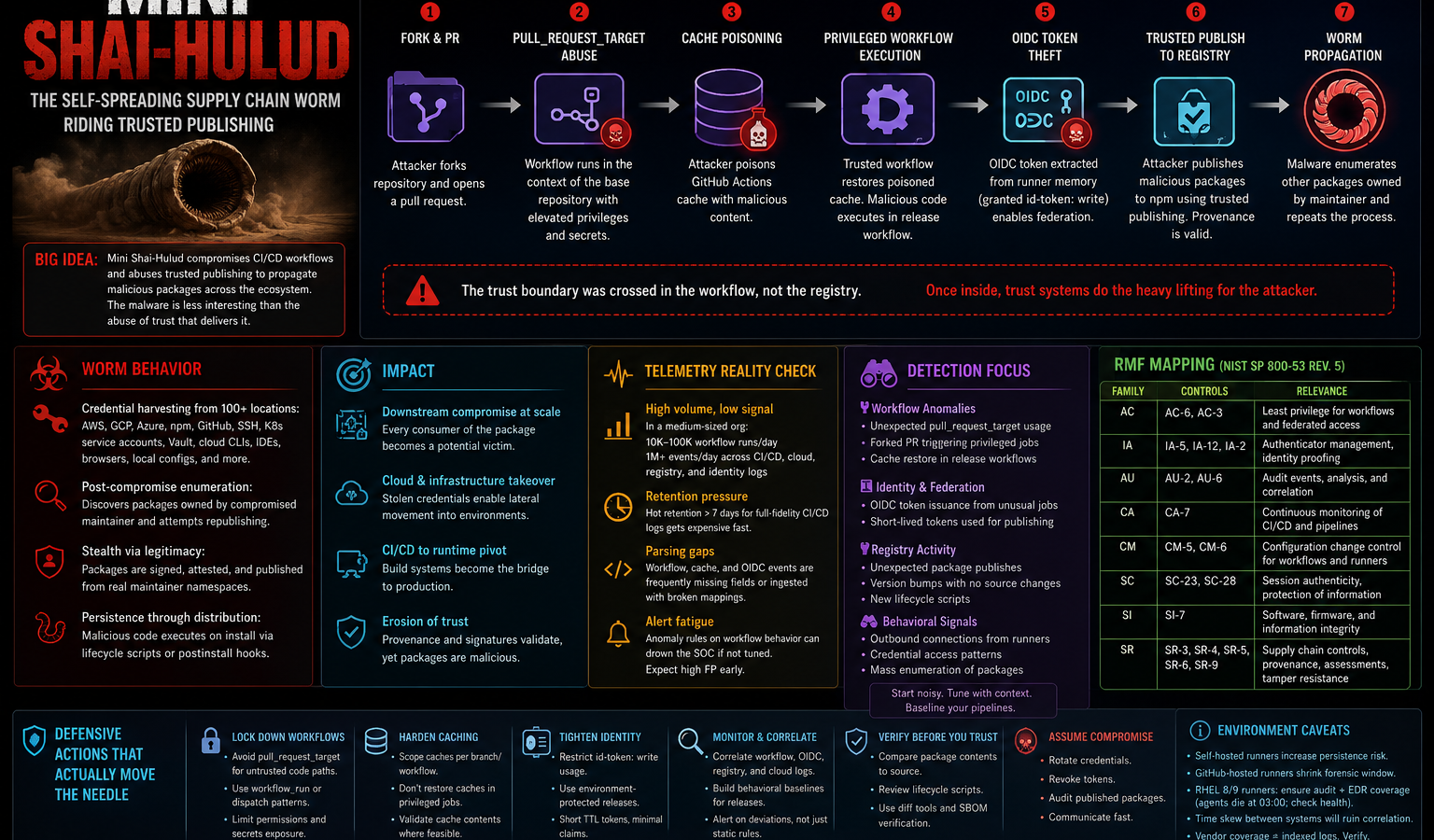

Mini Shai-Hulud is a software supply chain worm in the literal sense. It spreads by stealing publishing authority and reusing that authority to compromise additional packages. Not SMB. Not RDP. Not exposed Jenkins consoles somebody forgot to put behind SSO in 2021. Identity and workflow trust became the transport layer.

The TanStack Incident Changed The Conversation

The TanStack compromise is the cleanest public teardown so far because they actually published enough detail to reconstruct the chain instead of hiding behind “sophisticated actor” language.

The sequence appears to have looked roughly like this:

- attacker abuses a dangerous pull_request_target workflow pattern

- fork-controlled code executes in privileged workflow context

- GitHub Actions cache gets poisoned

- later trusted workflow restores poisoned cache contents

- malicious code executes inside release workflow

- OIDC token extracted from runner memory

- packages published directly to npm through trusted publishing

Look, if your release architecture allows untrusted execution paths to influence trusted cache state, you already lost. You just do not know it yet.

And yes, people keep cargo-culting pull_request_target because GitHub examples and random CI blog posts keep showing it as the “easy” way to handle automation against forks. The docs are incomplete on the risk model. Not technically wrong, just dangerously underspecified in practice.

There is a huge difference between:

“workflow triggered from forks”

and:

“fork-controlled code executes inside privileged context”

People blur those together constantly.

Shared CI/CD Caching Quietly Became A Massive Problem

This is one of those things security teams keep underestimating because cache systems sound operational rather than security-relevant.

They are security-relevant now.

GitHub Actions cache poisoning has been discussed for a while, but most environments still treat caches like harmless build acceleration plumbing. They are not harmless if trust boundaries overlap.

Particularly in environments with:

- monorepos

- self-hosted runners

- shared package-manager caches

- branch reuse patterns

- workflow inheritance

- release pipelines restoring artifacts from partially untrusted jobs

The pattern shows up a lot in npm-heavy shops running Ubuntu 22.04 GitHub-hosted runners with aggressive pnpm caching because cold installs are expensive and people got tired of watching CI times explode after every dependency graph update.

Then somebody restores a poisoned cache into a release job.

And now your provenance pipeline is faithfully signing malware.

Which is exactly what happened here.

Provenance Did Not Fail

This part is important because people are already saying “see, provenance is useless.”

No. The provenance worked.

The attacker operated inside the trusted publishing path, so the provenance accurately reflected the artifact lineage. The system did what it was designed to do. The assumption that provenance equals safety is what failed.

That distinction matters operationally because a lot of security programs are currently treating SLSA provenance like a cryptographic morality certificate instead of a chain-of-custody artifact.

Those are not the same thing.

SI-7 and SR-4 discussions are going to have to mature pretty quickly because modern attacks are increasingly about workflow compromise rather than artifact forgery.

If the release workflow itself is compromised:

- signatures still validate

- attestations still verify

- package lineage still appears legitimate

- hashes still match

- dependency managers still trust the package

The entire downstream ecosystem consumes it anyway.

Honestly, some of the marketing around software attestation has been irresponsible. Vendors keep implying provenance meaningfully answers whether a package is trustworthy. It answers whether the package came from the workflow it claims to come from. Different question.

Very different.

The Worm Characteristics Matter More Than The Payload

The malware behavior itself was pretty standard post-exploitation collection:

AWS creds.

GCP creds.

npm tokens.

SSH keys.

Kubernetes service-account locations.

Vault material.

GitHub auth artifacts.

Developer workstation secrets.

The payload reportedly scraped more than a hundred common credential paths. Which sounds dramatic until you realize half the offensive tooling ecosystem already does that.

The interesting part is propagation.

Once the malware gained publishing capability, it reportedly enumerated packages owned by the compromised maintainer and attempted to publish infected versions of those as well. That turns this from “dependency compromise” into identity-driven lateral movement across package ecosystems.

The registry becomes the terrain.

That lands squarely in SR territory:

- SR-3

- SR-4

- SR-9

And honestly, most RMF packages are still weak here because supply chain discussions tend to collapse into procurement language instead of operational trust modeling.

A package registry is not just a supplier relationship anymore. It is a distributed execution environment with delegated trust inheritance attached to every install command.

Different threat model entirely.

CI/CD Systems Are Production Infrastructure Now

A lot of organizations still mentally classify GitHub Actions, GitLab runners, Jenkins nodes, Azure DevOps pipelines, and artifact repositories as “developer tooling.”

That categorization is outdated.

These systems are now:

- identity brokers

- release authorities

- cryptographic signing environments

- software distribution systems

- trust propagation infrastructure

Treating them like lower-tier support systems under RMF is getting dangerous.

I keep seeing environments with tighter segmentation around a random internal wiki than around the release runners publishing production artifacts to customers.

Meanwhile the GitHub Actions runner has:

id-token: write

broad repo access

artifact signing capability

registry publication rights

cloud federation

…and somebody still allowed arbitrary fork execution paths to interact with the same cache scope.

Cool.

The Detection Side Is Messier Than Vendor Decks Suggest

Everybody suddenly claims they can detect supply chain compromise after incidents like this. Then you open the actual telemetry and realize half the fields are null because the parser broke three agent revisions ago and nobody noticed.

This is where reality kicks in.

If you want meaningful visibility into attacks like Mini Shai-Hulud, you need telemetry from:

- workflow execution

- cache restore/save events

- package publication

- OIDC issuance

- runner process trees

- registry access

- dependency lifecycle execution

- outbound network connections from ephemeral runners

Now scale that across a few thousand builds a day.

The log volume gets ugly fast, especially if you are retaining GitHub audit streams, CloudTrail, Kubernetes audit logs, and Sysmon for self-hosted runners all in the same Splunk environment with hot retention longer than seven days. Somebody from finance eventually notices the bill.

Then comes sampling.

Then comes selective retention.

Then comes “temporary” parser suppression rules.

Then comes alert tuning.

And eventually your detection engineering team quietly disables the noisiest workflow anomaly rules because every release engineer in the company started opening Sev2 tickets over false positives.

The vendor demos never include that part.

Also — if you are running ephemeral GitHub-hosted runners, your forensic window is tiny. Tiny. Once the runner dies, good luck reconstructing volatile process state unless you already had collection in place.

Which most shops do not.

OIDC Changed The Blast Radius

Trusted publishing through OIDC is still generally better than long-lived registry tokens. I do not think this incident changes that.

But it absolutely changes the operational assumptions around workflow identity.

A lot of environments currently hand out id-token: write permissions too broadly because the permission itself sounds harmless. People treat it like enabling federation capability instead of enabling credential minting authority.

That is a mistake.

If attacker-controlled code executes inside a workflow capable of minting trusted credentials, the attacker effectively inherits downstream publishing authority.

Not permanently. Briefly.

Briefly is enough.

Particularly because modern release systems automate everything after authentication succeeds.

The thing is, ephemeral credentials reduce persistence risk. They do not reduce abuse impact during active compromise. Those are separate security properties.

IA-5 helps with credential management.

AC-6 still decides whether the workflow should have had that authority in the first place.

AI Tooling Keeps Showing Up In These Incidents For A Reason

Mistral AI and Guardrails AI showing up in reporting is not random.

AI tooling ecosystems have accumulated a pretty dangerous mix of characteristics:

- massive dependency chains

- extremely high release velocity

- developer convenience-first defaults

- broad token exposure

- local workstation execution

- terminal access

- cloud API integration

- aggressive plugin ecosystems

A lot of these environments effectively normalized “run arbitrary helper code locally” before anybody stopped to think through the implications.

And yes, traditional developer ecosystems already had that problem too. AI tooling just accelerated it.

Especially in Python environments.

Anybody who has untangled a polluted pip dependency tree inside a shared ML notebook environment already knows exactly how ugly this gets.

Particularly in Kubernetes-heavy ML stacks where service-account sprawl already borders on parody.

What To Actually Go Check

If you are trying to figure out exposure, start with package versions and workflow behavior before you go hunting for exotic malware indicators.

Open the workflows.

Search for:

pull_request_target

id-token: write

shared cache restore logic

release jobs restoring artifacts from earlier workflows

Then look at package publication history around May 2026.

On the endpoint side:

- grep shell history for unexpected npm installs

- inspect .npmrc

- review GitHub CLI auth state

- check ~/.aws, ~/.config/gcloud, Kubernetes config paths

- inspect unusual outbound connections from build runners

- look for lifecycle script execution during package install

If you have Sysmon coverage on self-hosted Linux runners — uncommon, but some FedRAMP High shops do — process ancestry around node execution during release jobs becomes useful fast.

Assuming the runner actually forwarded logs correctly, which on older RHEL 8 images running custom Fluent Bit pipelines is honestly not a safe assumption.

The Real Lesson

Mini Shai-Hulud did not bypass trust systems.

It operated inside them long enough that the trust systems distributed the payload on its behalf.

That is a much harder problem than fake packages and typo-squatted dependencies.